It is well known that Monad is more powerful than Applicative Functor. Using the Monad methods you can implement the Applicative ones, to the point that in recent GHC versions we have

with equivalence laws

and default implementation

There are many good examples of Applicatives that are not, and can not be, Monads, like Validation and ZipList

But the question I was asking myself these days:

If we have an Applicative that is also a Monad, is there any reason to prefer

<*>overap

Developing some intuition

From the Monad laws above we know that in fact they produce the same result, but could important performance differences exist?

Comparing the Monad and Applicative minimal implementations, we can expect some kind of performance difference. After all, with >>= the continuation function has to create the monadic context dynamically:

The right argument to >>= has type Monad m => a -> m b, so it has to create the monadic context during execution. On the other hand, for Applicative, the output context is fully defined by the “program” not by the evaluation of the function:

When we do ma >>= f, f is in charge of creating the final monadic context. But f :: a -> m b doesn’t have access to the original context it is being chained to. So the new context gets created with no knowledge of the original one.

On the other hand, when we do f k <*> f a the <*> operator itself is in charge of creating the output applicative context, and it does so with access to the initial one. In that way, there is opportunity for optimizing the creation of the output context.

Based on this intuition, let’s try to find an example in a monad where creating the output context could be optimized with the extra knowledge.

An array Monad

Regular Haskell Arrays, in Data.Array are not monads or applicatives. They are too powerful, allowing for arbitrary index values. For example, let’s take the right identity law for monads

as will have certain index values, but we have no way to make return create an array with the same index values as has, for all as. There is no way to satisfy the law.

But we can create our own, much simplified, 1D array that can in fact be turned into a Monad. Let’s write the most basic array, in fact using Haskell’s Data.Array as a backend:

data Arr a = Arr {toArray :: !(Array Integer a)}

fromList :: [a] -> Arr a

fromList [] = error "No empty arrays"

fromList as = Arr $ listArray (0, genericLength as - 1) asWe can only create these arrays from a list. These are terrible arrays, performance is going to be awful, but that’s not the point.

Now we provide instances for Functor, Applicative and Monad.

Nothing fancy there, the usual unwrapping and wrapping and delegating to Data.Array’s implementation.

For the <*> to behave similarly to lists, we want to apply every function on the left to every value in the right argument array. We can use list comprehension and the fact that Data.Arrays are Foldable so they provide toList. Since we are at it, we make our Arr also Foldable

instance Foldable Arr where

foldMap f = foldMap f . toArray

instance Applicative Arr where

pure = fromList . pure

fs <*> as = fromList [f a | f <- toList fs, a <- toList as]Again, this is going to be horrible performance, we turn the arrays into lists, then use the lists for the cartesian product, and finally turn the resulting back into an Arr. The key here is that we only create a single Arr, since we know the applicative contexts on the left and right, we know exactly what the size of the resulting array will be, and we can just construct it.

On the other hand, when we make Arr a Monad

There is no way around it, each call to f creates a new Arr, and finally we need to create yet another big array. Data.Array is strict on the indexes, this is more work that for the Applicative case.

Benchmark

Let’s run the same operation using both the Applicative and the Monad. The fantastic criterion library can be used to get some numbers.

applicativeWork :: (Applicative f, Foldable f, Num a) =>

f a -> a

applicativeWork as = sum $ (+) <$> as <*> as

monadWork :: (Monad f, Foldable f, Num a) =>

f a -> a

monadWork as = sum $ ((+) <$> as) `ap` asWe sum the results of a cartesian product, both on the applicative context, using <*>, and on the monadic context using ap. And now we drive it with criterion’s defaultMain and a reasonable size. As a control case, we do the same for lists.

main = do

let n = 500 :: Int

l = [0..n]

as = fromList l

defaultMain [

bgroup "array" [

bench "applicative" $ nf applicativeWork as

, bench "monad" $ nf monadWork as]

,bgroup "list" [

bench "applicative" $ nf applicativeWork l

, bench "monad" $ nf monadWork l]

]

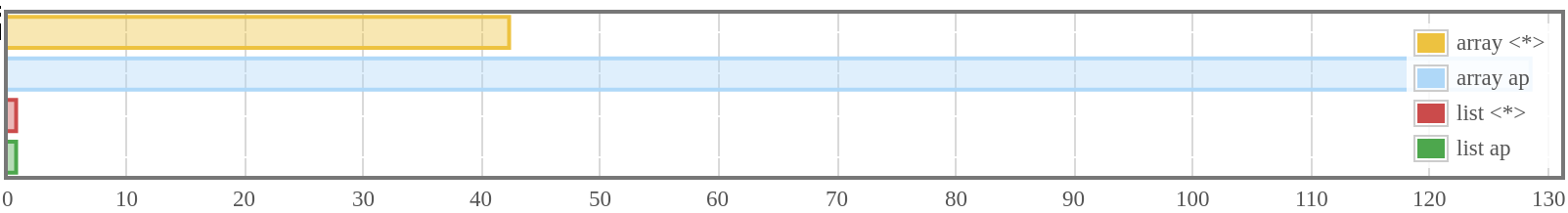

We see for Arr the Applicative is around three times faster than the Monad, while for lists, times are exactly the same.

So, that’s one reason to prefer <*> over ap, for some monads the former can be vastly more efficient.

Code

You can find all the source for this post on GitHub